Every so often we experience a network outage because a piece of equipment fails. One switch we use across our campus has a power supply failure mode that trips the power, so one bad switch takes out everything. However, most of the time I’m impressed at just how resilient and reliable the kit is. Network switches in dirty, hot environments run reliably for years. In one case we had a switch with long-since-failed fans, in a room that used to reach 40°C. It finally fell over one hot summer’s day when the temperature hit 43°C. Even then it was OK once it had cooled down.

Most recently there was a water leak in a building. I say leak; in fact a pressure vessel burst, so mains-pressure hot water poured through two floors of the building for a couple of hours.

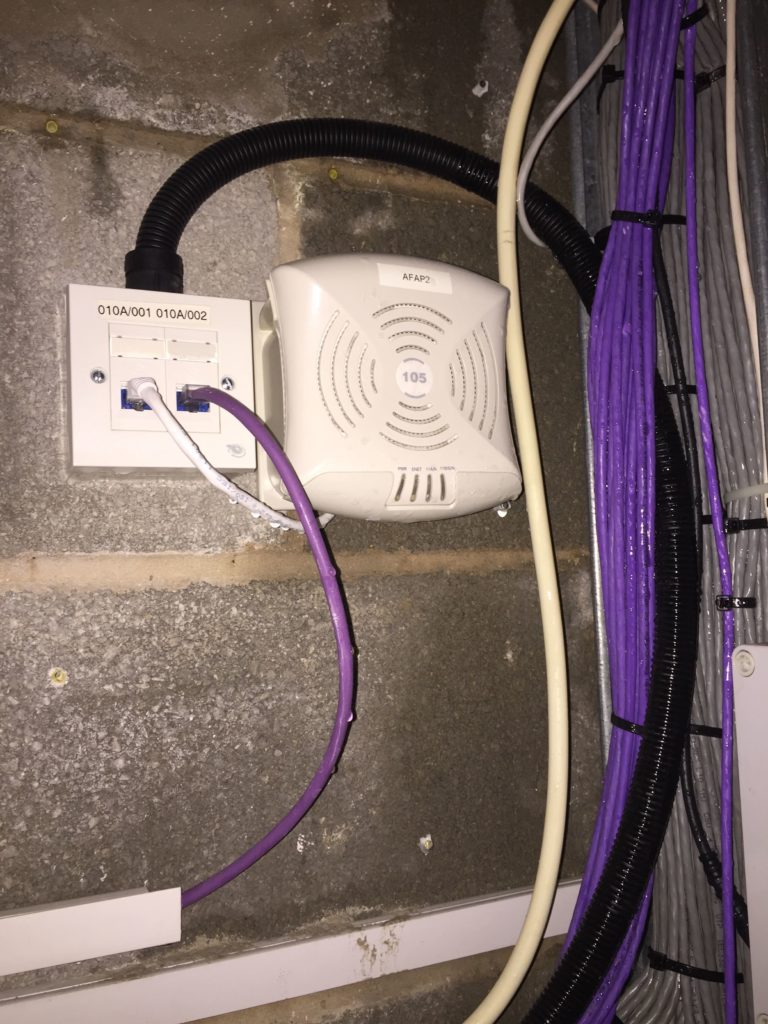

Let’s not reflect on the building design that places active network equipment and the building power distribution boards next to the questionable plumbing, but instead consider the life of this poor AP-105.

After happily serving clients for the past seven or eight years, it was time for a shower. It died. Not surprising. What’s perhaps more surprising is that, once dried out, the AP functioned perfectly well.

This isn’t the first time water damage has been a problem for us. Once, while investigating a user complaint with a colleague, we found a switch that had been subject to such a long-term water leak that it had limescale deposits across the front and the pins in the sockets had corroded. It was in a sad way, but even though the cabinet resembled Mother Shipton’s cave, the switch was still online.

I have seen network equipment from Cisco, HP, Aruba, Ubiquiti and Extreme all subjected to quite serious abuse, in conditions far outside their environmental specifications.

This isn’t to suggest we should be cavalier in our attitude towards deployment conditions – rather to celebrate the level of quality and reliability that’s achieved in the design and manufacturing of the equipment we use.